Originally published on Medium – Apache Airflow publication.

Imagine you are a data engineer at a growing tech company, and one of your key responsibilities is to ensure that data from various sources is collected, transformed, and loaded into a central data warehouse for analysis. The complexity of managing these data pipelines increases as the volume of data grows, leading to frequent errors, missed deadlines, and a lot of manual intervention.

My Journey with Airflow

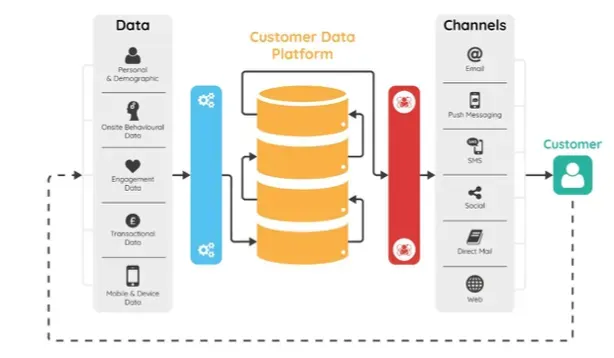

Back in 2019, I started working on a project to build a customer data platform (CDP) for a client. This involved processing massive amounts of data through various microservices. Initially, we used Talend, an ETL tool, but managing it became cumbersome. Even minor changes to the pipeline or data schema required rebuilding and redeploying the entire Talend job.

This led me to explore alternatives, and that’s when I came across Apache Airflow. I dug into how it worked and became convinced that it was a better solution for our needs. I presented my findings to my team, and they agreed. That’s how my journey with Airflow began!

Post this, I worked for 3–4 years and gained much knowledge and solved different use cases using Apache Airflow.

High Level Architecture Diagram

About Airflow

Apache Airflow is an open-source platform to programmatically author, schedule, and monitor workflows. Since its inception at Airbnb in 2014, Airflow has grown to become a widely adopted tool for orchestrating complex computational workflows and data processing pipelines. With its robust and extensible framework, Airflow is a top choice for data engineers and developers aiming to automate and manage their workflows.

Use Cases

Apart from the CDP use case above, Airflow solves a wide variety of problems:

-

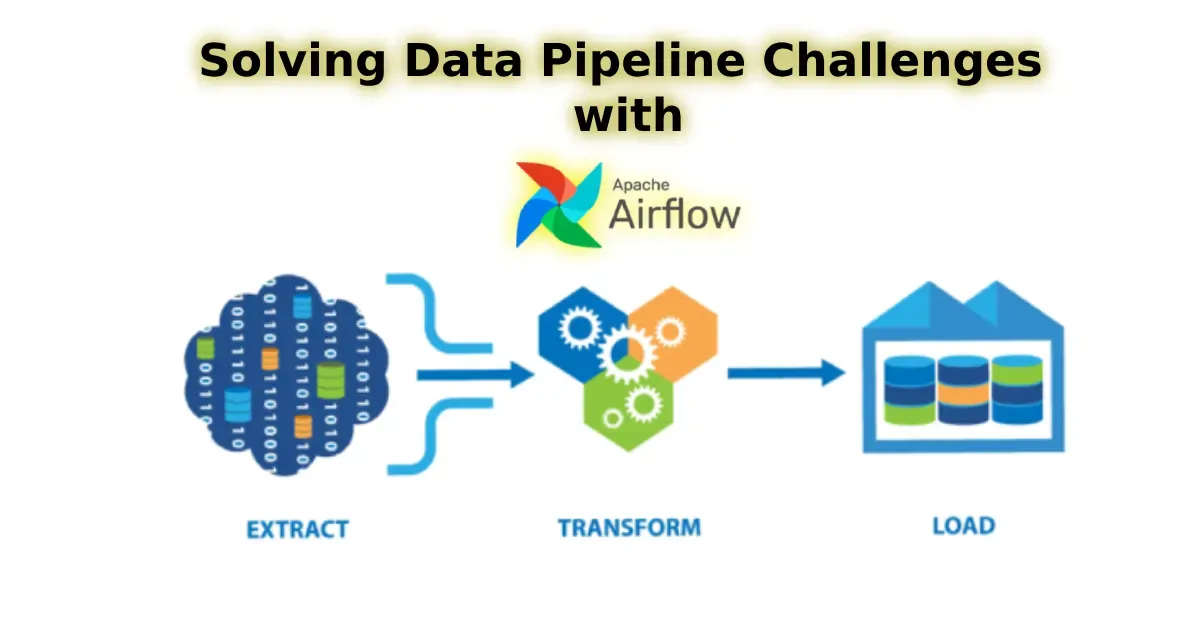

ETL Pipelines — Airflow handles ETL (Extract, Transform, Load) tasks with grace. It schedules and monitors these pipelines, ensuring your data remains consistent and reliable, just like a diligent librarian cataloging new books.

-

Data Warehousing — Airflow streamlines loading data into warehouses, performing necessary transformations along the way using built-in operators.

-

Machine Learning Pipelines — Airflow automates multiple steps like data preprocessing, model training, evaluation, and deployment, ensuring your ML workflows run smoothly and efficiently.

-

Data Processing — Airflow coordinates tasks such as data cleaning, validation, and aggregation, acting as a reliable assistant that keeps everything on track.

-

DevOps Automation — Airflow can automate tasks like running backups, monitoring system health, and deploying applications, making it invaluable for maintaining operational efficiency.

Airflow Pros 👍

-

Scalability 📈 — Airflow scales seamlessly, handling very large workflows and distributing tasks across multiple workers.

-

Flexibility 🤸 — With Python-based DAG definitions, you can easily customize your workflows to fit your unique requirements.

-

Extensibility 🧩 — Airflow’s built-in operators (Bash, Python, Kubernetes Pod Operators, and others) and the ability to create custom operators allow it to integrate with numerous external systems and APIs.

-

Community and Ecosystem 👫 — Being open source, Airflow benefits from a large and active community. This ensures continuous improvements and a wealth of plugins and integrations.

-

UI and Monitoring 📊 — The web interface provides a clear view of workflow status, logs, and task details, making debugging and monitoring a breeze.

Airflow Cons 👎

-

Complexity 🌀 — The learning curve can be steep, especially for those new to Python or workflow orchestration. Challenging but rewarding once mastered.

-

Resource Intensive ⚙️ — Airflow can consume significant system resources, particularly for large DAGs and high-frequency task execution. Managing these resources effectively is crucial.

-

Operational Overhead ⏳ — Maintaining an Airflow deployment, including managing the database and worker nodes, can be complex and require significant operational effort.

- Dependency Management 🧩 — Handling Python dependencies across different tasks can be challenging, often requiring careful management of virtual environments or containers.

Conclusion

It’s been almost 4–5 years since I started working on Airflow — from writing simple tasks to deploying it across GCP, Azure, and AWS. It’s been an amazing journey. Once you master this tool, it will be a game-changer for your data workflows.

Airflow is definitely worth exploring. My experience has been overwhelmingly positive, and it’s become a critical tool for our data engineering and workflow orchestration.